By Anubhav Awasthi · March 4, 2026

You feel the pressure on every req. Fill fast, avoid risk, prove fairness, protect the brand. Traditional tools do not keep up. At the same time, every AI vendor promises magic. You need something simpler. You need AI recruitment transparency that you can explain to legal, operations, and frontline hiring managers in under five minutes.

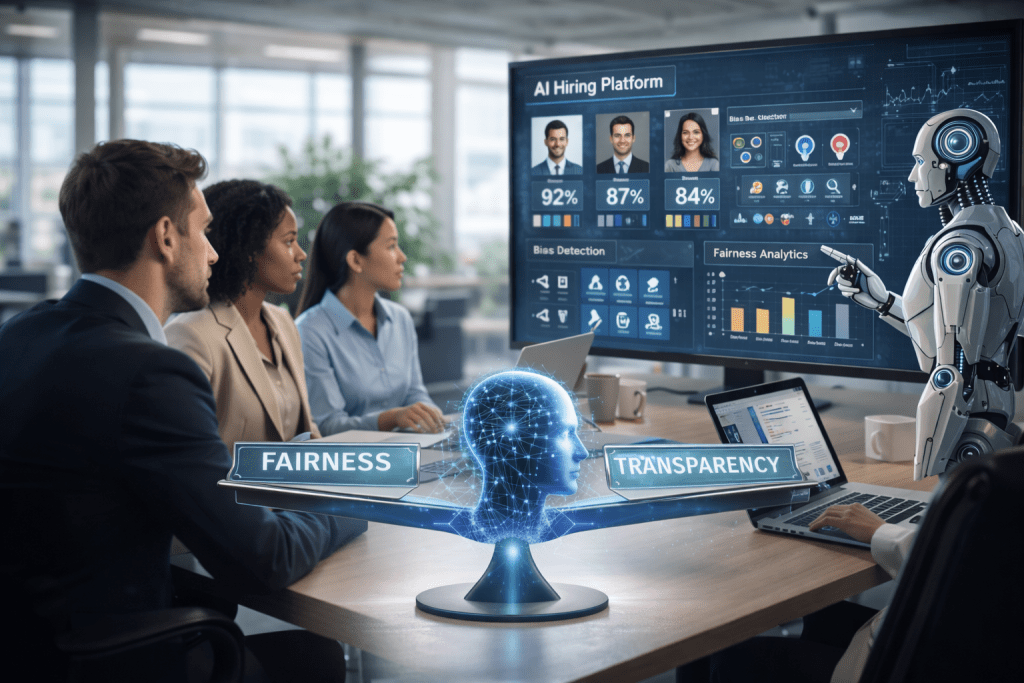

Fairness and speed should not be at odds. With the right design, AI can help you run more transparent recruitment processes, reduce bias, and give candidates a clear view of how you hire. The key is not more tech. The key is visible signal, clean rules, and accountable outcomes.

Identifying Common Sources of Bias in Traditional Hiring

Before you fix bias, you need to see where it hides in your current process. Traditional hiring stacks bias on top of bias, often without clear audit trails.

Subjective screening and gut feel

Recruiters often work through large applicant volumes under time pressure. That workload invites shortcuts. Studies show that unstructured interviews have weak predictive power for job performance compared to structured methods, sometimes explaining less than 10 percent of performance variance. Gut feel fills the gaps, and gut feel reflects personal preference more than job fit.

When every recruiter uses different mental models, you get different outcomes for similar candidates. Those inconsistencies are almost impossible to document or defend.

Resume-based filters that amplify inequality

Most high volume teams still lean hard on resumes and quick scans of education, gaps, and brand name employers. That approach bakes in historic inequality. Research shows applicant name alone can affect callbacks, with some studies finding roughly a 50 percent gap in callback rates between resumes with white sounding and African American sounding names and otherwise identical credentials.

If your process gives heavy weight to school pedigree, previous brands, or continuous employment, you have bias hiding inside criteria that look neutral on paper.

Inconsistent application of policies

You probably have written guidelines for fair candidate screening. In reality, policies break at scale. Managers skip questions. Recruiters adjust thresholds to hit fill targets. Calm rules on slide decks turn into noisy reality on the store floor or in the distribution center.

Auditability suffers. You struggle to reconstruct why a candidate moved forward, why someone stalled, or why a manager overruled a screen. That opacity raises risk with regulators and with your own workforce.


How AI Can Enhance Fairness in Hiring

Ethical AI in hiring is not about handing decisions to an algorithm. It is about using structured data and tested models to remove guesswork and reveal signal. AI hiring fairness starts from design, not from a marketing label.

Standardizing decisions with structured criteria

A strong system for AI recruitment transparency converts your hiring model into clear, job related variables. Instead of scanning resumes, you define a set of skills, experiences, and behavioral signals that link to outcomes like retention and performance.

For example, Cadient SmartMatch™ and SmartScore™ focus on role specific predictors such as schedule fit, tenure patterns, and relevant task exposure. Those variables link directly to quality of hire, not to noisy proxies like school prestige. Every candidate receives the same scoring logic, which reduces random variation across recruiters and locations.
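
A minimal sketch of what "the same scoring logic for every candidate" can look like in code. The feature names and weights below are hypothetical and chosen only for illustration; they are not Cadient's actual model. The point is that one fixed rubric produces both a score and a list of drivers that a recruiter can read.

```python
from dataclasses import dataclass

# Hypothetical weights for illustration only; not any vendor's real scoring logic.
WEIGHTS = {"schedule_fit": 0.5, "task_exposure": 0.3, "tenure_pattern": 0.2}


@dataclass
class ScoreResult:
    total: float
    drivers: list  # (feature, contribution) pairs, largest contribution first


def score_candidate(features: dict) -> ScoreResult:
    """Apply the same weights to every candidate. Feature values are assumed to be in [0, 1]."""
    contributions = {
        name: weight * features.get(name, 0.0) for name, weight in WEIGHTS.items()
    }
    drivers = sorted(contributions.items(), key=lambda kv: kv[1], reverse=True)
    return ScoreResult(total=round(sum(contributions.values()), 4), drivers=drivers)


result = score_candidate(
    {"schedule_fit": 1.0, "task_exposure": 0.5, "tenure_pattern": 0.0}
)
print(result.total, result.drivers[0])
```

Because the weights live in one place, a reviewer can audit them, and every score can be traced back to the features that produced it.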

Using data to target retention, not bias

You feel the cost of turnover more than anyone. The Bureau of Labor Statistics reports that annual total separations in retail trade often sit near 60 percent. Every bad hire multiplies recruiting cost, training time, and lost productivity. Ethical AI in hiring can reduce this churn if it optimizes on retention signals that stay within fair and compliant limits.

SmartTenure™ looks at historical tenure outcomes and flags patterns tied to early exits. It does not need to use protected attributes to see who tends to stay. That focus supports AI hiring fairness by rewarding candidates who are likely to succeed based on behavior and fit to the role environment, not on identity.

Reducing human bias without removing human control

Bias free recruitment software should keep humans in charge while removing avoidable blind spots. In practice, that means:

• AI assists with ranking and shortlisting based on defined criteria.

• Recruiters see clear scores and drivers, not a black box label.

• Managers can review and override with notes tied to policy.

When AI handles initial screening, your team spends more time on fair candidate screening and coaching hiring managers and less time on manual resume triage that invites bias.

Importance of Transparency in AI Recruitment Processes

You do not only need fairness. You need proof of fairness. AI recruitment transparency turns your hiring stack into something that regulators, candidates, and business leaders can understand without a PhD in data science.

Building trust with candidates and employees

Candidates expect clarity about how you use their data. A global survey found that 85 percent of consumers want companies to be transparent about AI decisions. Employees ask similar questions when they see automated assessments enter the process.

Transparent recruitment processes answer those questions in plain language. You can share which data points your system uses, how long you store them, and how candidates can request review. This posture reduces suspicion, supports your employer brand, and strengthens internal trust with HR, DEI, and legal.

Meeting regulatory expectations

Regulators have started to tighten controls on automated hiring decisions. New York City's Local Law 144 requires employers that use automated employment decision tools to have an independent bias audit completed annually and to publish a summary of the results. Similar efforts are under discussion in multiple states and at the federal level.

Ethical AI in hiring prepares you for this shift. You can trace why a model produced a certain score, which features influenced that score, and how often those outcomes differ across demographic groups. When your AI vendor cannot provide this line of sight, you take on hidden risk.

Making performance tradeoffs visible

Transparency is not only a legal requirement. It is an operational one. You face constant tradeoffs between time to fill, quality of hire, and labor cost. Research from McKinsey links top quartile talent practices to roughly 2.3 times higher EBITDA performance. That impact depends on strong hiring decisions, not fast guesses.

Visible AI models let you see how changes to score thresholds affect funnel size, diversity outcomes, and projected tenure. You can tune your hiring engine with accurate tradeoffs instead of guesses or political pressure.
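
One way to make that tradeoff concrete is a threshold sweep over historical, already scored applicants. The sketch below assumes a small hypothetical back-test dataset of (score, group, stayed 90 days) records; the field layout, group labels, and thresholds are illustrative assumptions, not output from any real system.

```python
# Hypothetical back-test records: (score, group label, stayed_90_days).
applicants = [
    (0.9, "A", True), (0.8, "B", True), (0.7, "A", True), (0.6, "B", False),
    (0.5, "A", False), (0.4, "B", True), (0.3, "A", False), (0.2, "B", False),
]


def sweep(applicants, thresholds):
    """For each cutoff, report funnel size, group mix, and 90 day retention."""
    rows = []
    for t in thresholds:
        passed = [a for a in applicants if a[0] >= t]
        by_group = {}
        for _, group, _ in passed:
            by_group[group] = by_group.get(group, 0) + 1
        retained = sum(1 for a in passed if a[2])
        rows.append({
            "threshold": t,
            "funnel_size": len(passed),
            "by_group": by_group,
            "retention_rate": retained / len(passed) if passed else None,
        })
    return rows


rows = sweep(applicants, [0.3, 0.5, 0.7])
for row in rows:
    print(row)
```

Reading the rows side by side shows what a stricter cutoff costs in funnel size and group mix, and what it buys in retention, before you change anything in production.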


Best Practices for Implementing Bias Free AI Hiring

You do not fix bias by buying a tool with a friendly user interface. You fix it with discipline in how you design, deploy, and monitor AI across the hiring lifecycle.

Start with a clear problem and measurable outcomes

Define the outcome you want before you select tech. For example:

• Reduce 90 day turnover by 15 percent in hourly roles.

• Cut average time to fill from 25 days to 15 days without widening gaps in diversity metrics.

• Increase interview to hire conversion while holding quality of hire steady.

Tie AI hiring fairness to those outcomes. With SmartSuite™, you can track how SmartMatch™, SmartScore™, and SmartTenure™ influence retention, productivity, and time to fill by location and role.

Use validated, job related features

Bias free recruitment software must rely on predictors that connect to real job performance. That means:

• Behavioral questions tied to role demands.

• Work history aligned with similar tasks or environments.

• Schedule flexibility where that matters for coverage.

Do not connect models to sensitive attributes, or to noisy proxies like zip code or school rank. Validate models with back testing and ongoing monitoring. Academic work shows that structured interviews, cognitive ability tests, and work sample tests predict performance more accurately than unstructured interviews, sometimes more than doubling predictive validity from a correlation of roughly 0.2 to 0.5. Use that science in your feature design.

Build human review and clear override paths

AI should narrow the field, not close the door. Good practice includes:

• Letting recruiters view score details and top drivers.

• Allowing overrides with documented business reasons.

• Auditing overrides to identify patterns by manager or region.

This design keeps accountability with humans while still standardizing the bulk of fair candidate screening.
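
The override audit described above can start as a simple aggregation. This sketch assumes a hypothetical decision log with `manager`, `overrode_score`, and `reason` fields; those names are illustrative and would map to whatever your system actually records.

```python
from collections import defaultdict

# Hypothetical decision log; each record is one hiring decision.
decisions = [
    {"manager": "m1", "overrode_score": True, "reason": "internal referral policy"},
    {"manager": "m1", "overrode_score": True, "reason": ""},
    {"manager": "m1", "overrode_score": False, "reason": ""},
    {"manager": "m2", "overrode_score": False, "reason": ""},
    {"manager": "m2", "overrode_score": True, "reason": "schedule change"},
    {"manager": "m2", "overrode_score": False, "reason": ""},
    {"manager": "m2", "overrode_score": False, "reason": ""},
]


def override_summary(decisions):
    """Count overrides and undocumented overrides per manager."""
    totals = defaultdict(lambda: {"decisions": 0, "overrides": 0, "undocumented": 0})
    for d in decisions:
        row = totals[d["manager"]]
        row["decisions"] += 1
        if d["overrode_score"]:
            row["overrides"] += 1
            if not d["reason"].strip():
                row["undocumented"] += 1
    return {
        m: {**row, "override_rate": row["overrides"] / row["decisions"]}
        for m, row in totals.items()
    }


summary = override_summary(decisions)
for manager, row in summary.items():
    print(manager, row)
```

A high override rate is not a problem by itself. Overrides with no documented reason, or rates that cluster in one manager or region, are the patterns worth a conversation.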

Monitor for drift and bias over time

Even strong models can drift as labor markets, job content, and sourcing channels change. Set up regular reviews that check:

• Score distributions by demographic group.

• Selection rates at each funnel stage.

• Retention and performance outcomes across groups.

SmartSuite™ reporting allows you to review these metrics at scale and show where SmartSource™, SmartTexting™, and SmartScreen™ might influence outcomes. You adjust before small gaps turn into big problems.
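
As a rough illustration of the selection rate check, the sketch below computes each group's selection rate at one funnel stage and its impact ratio against the highest rate. The counts and group labels are made up, and the 0.8 cutoff follows the common "four-fifths" rule of thumb from the EEOC Uniform Guidelines. Treat a flag as a prompt for review, not a legal conclusion.

```python
def selection_rates(counts):
    """counts maps group -> (selected, applicants) for one funnel stage."""
    return {g: sel / total for g, (sel, total) in counts.items() if total > 0}


def impact_ratios(counts):
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(counts)
    if not rates:
        return {}
    top = max(rates.values())
    return {g: (r / top if top > 0 else None) for g, r in rates.items()}


# Hypothetical counts for illustration only.
stage_counts = {"group_a": (50, 100), "group_b": (30, 100)}

ratios = impact_ratios(stage_counts)
flagged = [g for g, r in ratios.items() if r is not None and r < 0.8]
print(ratios, flagged)
```

Running the same check at every stage, on a regular schedule, shows where in the funnel a gap opens up rather than only that one exists.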

Real World Case Studies

Transparent recruitment processes are already in use across high volume employers. The most effective teams treat AI as part of an operating system, not as a side project.

Large retailer targeting 90 day retention

A national retailer with thousands of hourly hires each month struggled with store level turnover. HR knew turnover cost was high, but leaders saw speed as the main priority. Workload fell on recruiters who used quick resume screens and manager preferences.

The talent team partnered with Cadient to implement SmartSuite™ across stores. SmartMatch™ and SmartTenure™ scored applicants on fit and predicted tenure using structured questions, past role patterns, and availability data. Managers still made final decisions but started with ranked candidate lists and clear scoring drivers.

Within a year, the company saw a double digit percentage point reduction in 90 day attrition for roles using the new process, along with shorter time to fill. Recruiters spent less time sorting low fit applicants and more time coaching managers on interviews and offers. Fairness improved because each candidate passed through the same documented, auditable scoring logic.

Multi unit hospitality group focused on fairness and compliance

A hospitality company operated in multiple jurisdictions with emerging rules on automated decision tools. Leadership wanted the efficiency of AI hiring but feared regulatory exposure and brand damage if candidates perceived the process as opaque.

Cadient deployed SmartSuite™ with strong AI recruitment transparency controls. Each role had a documented scoring rubric. HR and legal teams reviewed model features and approved them against internal policy. Candidates received clear language about how the system used their data and how to request human review.

After launch, the company tracked selection rate differences and audited model impact annually. Reporting showed consistent or improved diversity outcomes in early funnel stages and higher interview to hire conversion. The business gained confidence that ethical AI in hiring could support compliance, not threaten it.

Regional logistics provider improving screen consistency

A logistics provider managed dozens of sites, each with its own hiring habits. Some site managers screened aggressively. Others hired almost anyone to keep shifts covered. Turnover and safety incidents varied widely.

With Cadient SmartSuite™, the company standardized fair candidate screening for roles such as drivers, warehouse associates, and dispatchers. SmartScore™ weighted factors like shift preference, prior experience in physically demanding roles, and licensure data. SmartScreen™ ensured background checks and other compliance steps followed the same playbook across locations.

Over time, safety incidents declined and early attrition improved. Leaders finally had a shared view of quality of hire and a common language for performance in hiring, all supported by transparent AI models that sites could understand and trust.

Conclusion

AI on its own does not fix a broken hiring system. AI recruitment transparency, combined with clear outcomes and strong governance, helps you remove noise, raise fairness, and prove your process to every stakeholder who asks.

When you ground ethical AI in hiring in measurable results like time to fill, retention, and quality of hire, you protect your brand and your workforce. Bias free recruitment software becomes an operational advantage, not a compliance headache. You move from gut feel and hidden rules to visible signal, consistent scoring, and accountable decisions.

Cadient SmartSuite™, including SmartSource™, SmartMatch™, SmartScore™, SmartTenure™, SmartScreen™, and SmartTexting™, was built for intelligent high volume hiring. The platform helps you run transparent recruitment processes that hiring managers trust, candidates understand, and executives can defend.

If you are ready to replace guesswork with signal in your hiring, schedule a conversation with Cadient and see how AI driven, transparent recruitment can work in your operation.

FAQs

What is AI recruitment transparency?

AI recruitment transparency means you clearly explain how AI tools influence hiring decisions. You document which data you use, how models score candidates, and how humans review and approve final outcomes. The goal is understandable, auditable, and fair hiring, not black box automation.

How does AI support fair candidate screening?

AI supports fair candidate screening by applying the same job related criteria to every applicant. It ranks candidates based on structured data tied to success in the role, such as relevant experience, availability, or work preferences. Humans still make final calls, but they start from consistent signal rather than inconsistent gut feel.

Is ethical AI in hiring compliant with new regulations?

Ethical AI in hiring aligns with regulations when it avoids protected attributes, uses validated job related features, and includes ongoing bias audits. You also need transparency for candidates and regulators. Working with a partner that understands these expectations reduces your compliance risk.

What should I look for in bias free recruitment software?

Look for clear documentation of model features, robust reporting by demographic group, strong data security, and human override options. The system should integrate with your sourcing, assessments, and screening steps, so you can see end to end impact on diversity, retention, and time to fill.

How does Cadient support AI hiring fairness?

Cadient SmartSuite™ uses predictive models that focus on job related signals such as schedule fit, work history, and tenure patterns. Tools like SmartMatch™, SmartScore™, and SmartTenure™ help standardize decisions and reduce bias while keeping recruiters and managers in control. Reporting gives you a transparent view of model impact across your hiring funnel.