By Abhishek Patel · April 28, 2026

AI-driven recruitment isn’t a shiny HR trend anymore. In 2026, it’s the difference between a hiring team that answers candidates in minutes and one that loses great people to faster competitors. And yes, you can absolutely do this without turning your process into a cold, automated mess.

I’ve helped teams roll out everything from chatbots to interview intelligence, and I’ve also watched AI projects crash because no one defined guardrails. So let’s talk like grown-ups: what works, what breaks, what to buy, what to build, and how to keep candidates trusting you.

We’ll walk the whole funnel, show real scenarios, and give you the templates competitors keep “strategically” vague about. Because you don’t need hype. You need a playbook.

What Is AI-Driven Recruitment?

Definition and how it differs from traditional hiring

AI-driven recruitment is the use of machine learning and automation to assist recruiting work across sourcing, screening, scheduling, interviewing, and decision support. The key word is assist. If your system is making final hiring decisions on its own, you’re not “advanced.” You’re exposed.

Traditional hiring is mostly manual: recruiters search, read resumes, chase managers for feedback, and send the same emails 200 times. AI changes the workflow. It turns repeatable tasks into software actions and turns messy data into suggestions you can verify.

But here’s the honest truth: AI doesn’t “find the best candidate.” It finds patterns based on the data and rules you give it. If those patterns are biased, outdated, or low-quality, the output will be too.

Where recruitment process automation fits

Recruitment process automation is the operational backbone. Think: moving candidates between stages, triggering emails, syncing calendars, pushing jobs to boards, updating your ATS, and logging activity. It’s not always “AI” in the fancy sense, but it’s where most ROI shows up first.

So, how do I separate them? Automation runs the play. AI recommends the next best move. When you combine both, you get speed without chaos.


How AI Is Used Across the Hiring Funnel

Job description creation and inclusive language

Most job descriptions are copy-pasted Franken-docs. AI helps you draft faster, but the bigger win is inclusive language and clarity. Tools can flag gender-coded words, unnecessary degree requirements, and vague “rockstar” nonsense that turns qualified people away.
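To make “flag gender-coded words” concrete, here’s a minimal sketch of a pre-posting check. The term lists and requirement patterns below are hypothetical and illustrative only, not a validated lexicon; the point is that the tool surfaces phrases for a human to review rather than rewriting the posting on its own.

```python
import re

# Hypothetical, illustrative term lists. NOT a validated lexicon:
# swap in whatever list your team or vendor has actually tested.
CODED_TERMS = {"aggressive", "competitive", "dominant"}
HYPE_TERMS = {"rockstar", "ninja", "guru"}
REQUIREMENT_PATTERNS = [
    r"\b\d+\+? years required\b",  # hard experience floors worth questioning
    r"\bdegree required\b",        # degree gates that may not be job-relevant
]


def flag_job_description(text: str) -> dict[str, list[str]]:
    """Return terms and requirement phrases a human should review before posting."""
    lowered = text.lower()
    words = set(re.findall(r"[a-z]+", lowered))
    return {
        "coded_terms": sorted(words & CODED_TERMS),
        "hype_terms": sorted(words & HYPE_TERMS),
        "requirement_phrases": [
            match.group(0)
            for pattern in REQUIREMENT_PATTERNS
            for match in re.finditer(pattern, lowered)
        ],
    }


report = flag_job_description(
    "We want an aggressive rockstar. 10 years required. Bachelor's degree required."
)
```

A flagged phrase is a conversation starter with the hiring manager, not an automatic edit.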

One practical move: ask the model to generate two versions. Version A is “must-haves only.” Version B includes “nice-to-haves.” Then you and the hiring manager decide what truly belongs. You’d be shocked how often “10 years required” becomes “3 years preferred” after that conversation.

Programmatic job distribution and recruitment marketing

Programmatic job ads are basically performance marketing for hiring. AI can shift budget toward channels that produce applicants who actually pass screening, not just click “Apply.” And that matters because cheap applicants can be expensive noise.

In high-volume hourly hiring, I’ve seen teams cut wasted spend by 15% to 30% simply by letting the system reallocate budget daily based on conversion rates. Not magic. Just measurement.
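Here’s a minimal sketch of what “reallocate budget daily based on conversion rates” can mean, assuming the conversion signal is applicants who passed screening per dollar spent yesterday. The channel names, the 5% floor per channel, and the proportional split are assumptions for illustration; real programmatic platforms use their own (usually more sophisticated) bidding logic.

```python
def reallocate_budget(
    total_budget: float,
    yesterday: dict[str, tuple[float, int]],
    floor_share: float = 0.05,
) -> dict[str, float]:
    """Split tomorrow's budget by screen-passing applicants per dollar.

    yesterday maps channel -> (spend, applicants_who_passed_screening).
    Every channel keeps a small floor so you can keep measuring it.
    """
    if not yesterday:
        raise ValueError("need at least one channel")
    floor = total_budget * floor_share
    remaining = total_budget - floor * len(yesterday)
    if remaining < 0:
        raise ValueError("floor_share too high for this many channels")

    efficiency = {
        channel: (passed / spend if spend > 0 else 0.0)
        for channel, (spend, passed) in yesterday.items()
    }
    total_efficiency = sum(efficiency.values())
    if total_efficiency == 0:
        # No signal yet: split evenly instead of guessing.
        return {channel: total_budget / len(yesterday) for channel in yesterday}
    return {
        channel: floor + remaining * eff / total_efficiency
        for channel, eff in efficiency.items()
    }


# Hypothetical channels: same spend, very different screening outcomes.
plan = reallocate_budget(1000.0, {"board_a": (500.0, 10), "board_b": (500.0, 40)})
```

The floor matters: without it, a channel that has one bad day gets zero budget and you lose the data to ever re-evaluate it.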

Candidate sourcing

Sourcing is where AI can feel like a superpower. Modern tools search across public profiles, your ATS, alumni lists, and internal talent marketplaces. They also help with internal mobility, which is still weirdly underused at most companies.

Real scenario: a retailer needed 40 assistant managers in 60 days. External sourcing was slow and expensive. We used AI search across internal performance data and skills tags from learning platforms, then routed candidates to recruiters with a simple “warm intro” message. The fill rate jumped, and attrition dropped later because internal hires already knew the culture.

But don’t confuse “more reach” with “better fit.” AI expands the top of the funnel. You still need structured evaluation to protect quality.

Resume parsing, screening and skills matching

Resume parsing is table stakes now. The real battleground is skills matching vs keyword screening. Keyword screening is lazy and brittle. Skills matching tries to infer capability based on evidence: projects, certifications, portfolios, and comparable roles.

Now, is it perfect? Nope. If your job requires “customer de-escalation,” the model might overvalue call-center titles and undervalue hospitality experience. That’s why you need calibration: review false positives and false negatives every week during rollout.
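A weekly calibration review can be as simple as sampling candidates, having a recruiter judge each one independently, and comparing that judgment with what the tool did. This sketch assumes you record each sample as a pair of booleans; the field layout is hypothetical.

```python
def calibration_report(reviews: list[tuple[bool, bool]]) -> dict[str, float]:
    """Compare AI screening calls with a human reviewer's judgment.

    Each review is (ai_advanced, human_says_qualified) for one sampled candidate.
    """
    tp = sum(1 for ai, human in reviews if ai and human)
    fp = sum(1 for ai, human in reviews if ai and not human)
    fn = sum(1 for ai, human in reviews if not ai and human)
    tn = sum(1 for ai, human in reviews if not ai and not human)
    return {
        "true_positive": tp,
        "false_positive": fp,
        "false_negative": fn,
        "true_negative": tn,
        # Share of qualified people the tool would have screened out.
        "false_negative_rate": fn / (fn + tp) if fn + tp else 0.0,
        # Share of unqualified people the tool pushed forward.
        "false_positive_rate": fp / (fp + tn) if fp + tn else 0.0,
    }


week = calibration_report(
    [(True, True), (True, False), (False, True), (False, False), (False, True)]
)
```

In recruiting, the false negative rate is usually the one to watch most closely, because wrongly rejected candidates never show up anywhere else in your funnel data.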

One trick I like: build a “skills rubric” with 8 to 12 skills max per role family. Then train recruiters and hiring managers to score consistently. AI can help rank candidates against that rubric, but it should never be the rubric.
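One way to keep the rubric human-owned is to make it a plain data object that people define and the ranking code only reads. This sketch assumes 0 to 5 evidence scores per skill and equal or human-set weights; the class and function names are hypothetical, and missing evidence is listed for follow-up instead of being silently ignored.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rubric:
    """A human-owned skills rubric for one role family (weights set by people, not the model)."""
    role_family: str
    weights: dict[str, float]

    def __post_init__(self) -> None:
        if not 8 <= len(self.weights) <= 12:
            raise ValueError("keep the rubric to 8-12 skills")


def rank_candidates(rubric: Rubric, evidence: dict[str, dict[str, float]]):
    """Rank by weighted 0-5 evidence scores; missing skills score 0 and are listed for review."""
    total_weight = sum(rubric.weights.values())
    ranked = []
    for name, scores in evidence.items():
        weighted = sum(w * scores.get(skill, 0.0) for skill, w in rubric.weights.items())
        missing = sorted(skill for skill in rubric.weights if skill not in scores)
        ranked.append((name, weighted / total_weight, missing))
    return sorted(ranked, key=lambda row: row[1], reverse=True)


skills = [f"skill_{i}" for i in range(8)]  # placeholder skill names
rubric = Rubric("store_manager", {s: 1.0 for s in skills})
ranking = rank_candidates(
    rubric,
    {"cand_a": {s: 4.0 for s in skills}, "cand_b": {s: 5.0 for s in skills[:4]}},
)
```

Listing missing skills matters: a low rank caused by absent evidence is a prompt to ask the candidate, not a reason to reject them.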

Candidate engagement

Candidate engagement is where you win trust or lose it. Chatbots and SMS automation can answer FAQs, collect availability, confirm interest, and nudge incomplete applications. And yes, candidates actually like fast answers.

But the tone matters. A bot that sounds like a legal memo will tank your brand. Make it human. Make it helpful. And always provide a path to a person within one step.

Example: “Want me to connect you with a recruiter?” should be a default option, not a hidden escape hatch.

Scheduling and coordination

Scheduling is the silent killer of time-to-hire. AI schedulers can coordinate across time zones, panel availability, and interview formats, then auto-send confirmations and reminders. This alone can shave days off your funnel.

If you’re still doing scheduling through email threads, you’re paying recruiter salaries for calendar Tetris. That’s not a strategy.
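Here’s a minimal sketch of the core check behind panel scheduling across time zones: find the UTC slots that fall inside every interviewer’s local working hours. It assumes 9:00 to 17:00 working hours and uses fixed UTC offsets to stay self-contained, which ignores daylight-saving changes; a real scheduler should use IANA zone names via `zoneinfo` and read actual calendar busy times. The panelist names are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def _inside_hours(start: datetime, end: datetime, tz: timezone,
                  start_hour: int, end_hour: int) -> bool:
    """True if [start, end) sits within one local working day in zone tz."""
    local_start, local_end = start.astimezone(tz), end.astimezone(tz)
    if local_start.date() != local_end.date():
        return False  # slot crosses local midnight
    start_min = local_start.hour * 60 + local_start.minute
    end_min = local_end.hour * 60 + local_end.minute
    return start_hour * 60 <= start_min and end_min <= end_hour * 60


def common_slots(day_start_utc: datetime, panel: dict[str, timezone],
                 start_hour: int = 9, end_hour: int = 17,
                 slot_minutes: int = 60) -> list[datetime]:
    """Return UTC slot starts that fall inside every panelist's local working hours."""
    step = timedelta(minutes=slot_minutes)
    slots, t = [], day_start_utc
    while t < day_start_utc + timedelta(days=1):
        if all(_inside_hours(t, t + step, tz, start_hour, end_hour) for tz in panel.values()):
            slots.append(t)
        t += step
    return slots


panel = {
    "london_interviewer": timezone(timedelta(hours=1)),     # BST as a fixed offset
    "new_york_interviewer": timezone(timedelta(hours=-4)),  # EDT as a fixed offset
}
slots = common_slots(datetime(2026, 5, 4, tzinfo=timezone.utc), panel)
```

For this panel, only the 13:00, 14:00, and 15:00 UTC slots work for both interviewers, which is exactly the kind of narrow window email threads tend to miss.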

Interview support

Interview intelligence tools can generate question guides, capture notes, and produce summaries. Used well, they reduce bias because they push structure: consistent questions, consistent scoring, consistent documentation.

Used badly, they become surveillance. So set rules. Tell candidates what’s recorded, what’s transcribed, and how it’s used. And don’t let an “AI summary” replace actual interviewer accountability.

One practical workflow: the system drafts a summary, the interviewer must edit and confirm it, and the hiring manager sees both the structured scorecard and the edited notes. Clean. Defensible. Human.

Fit, engagement scoring and prioritization

Scoring can help triage, especially when you get 1,000 applicants for one role. But scoring is also where risk spikes. “Fit” can become a proxy for sameness. That’s how teams accidentally optimize for people who look like last year’s hires.

So, I prefer scoring that focuses on job-relevant skills, availability, location, and role requirements. Keep “culture fit” out of the model. If you want culture, define behaviors and measure them consistently in structured interviews.

Also: monitor model drift. If your business changes, your hiring patterns change. Your model will quietly get worse unless you review it on a cadence.

Offer, onboarding handoff, and internal mobility

AI can help generate offer letters, flag comp outliers, and predict offer acceptance based on pipeline signals like response speed and competing-process mentions. Then it can hand off cleanly to onboarding so new hires don’t fall into the classic black hole between “Congrats” and “Day 1.”

And internal mobility deserves a louder spotlight. When AI identifies internal candidates for open roles, you reduce time-to-fill and keep people from leaving. Retention isn’t just an HR metric. It’s a recruiting strategy.

Benefits of AI-Driven Recruitment with metrics

Faster time-to-hire and recruiter productivity

The most common measurable win is speed. Teams that automate scheduling, screening triage, and candidate comms often cut time-to-fill by 20% to 40%, especially in high-volume roles. Is that always realistic? No. But it’s common when your baseline process is messy.

Recruiter productivity improves too. I’ve seen a single recruiter manage 15% to 25% more requisitions without burning out, mainly because the system handles the repetitive chasing and sorting.

Speed matters because good candidates don’t wait. They accept the first solid offer that feels respectful.

Better candidate experience and responsiveness

Candidate experience is mostly about responsiveness. People can forgive a “no.” They don’t forgive silence.

With chatbots, SMS, and automated updates, you can respond in under 5 minutes for basic questions and within 24 hours for status updates. That alone can reduce application drop-off, especially for hourly roles where candidates apply to 10 jobs in a single sitting.

But don’t over-automate. If a candidate asks a nuanced question and gets a canned answer three times, you’ve lost them.

Quality of hire and skills-based matching

Quality of hire is tricky because it’s lagging and messy. Still, AI helps by pushing skills-based hiring: matching candidates to what the job actually needs, not just where they worked last.

When you combine skills matching with structured interviews, you often see better pass-through rates from onsite to offer, and fewer “we hired them but… it’s not working” regrets. And those regrets are expensive. A bad hire can cost 30% of first-year earnings or more depending on role complexity and ramp time.

Cost efficiency and scalability

High-volume hiring is where the math gets loud. If you hire 5,000 hourly workers a year and cut recruiter admin time by even 10 minutes per candidate, you’re talking about hundreds of hours back. That’s real money and real sanity.
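The “hundreds of hours” figure holds even under the most conservative reading. Counting the 10 minutes only once per hire gives 5,000 × 10 / 60 ≈ 833 hours a year; counting every candidate you process makes it larger. The 3-candidates-per-hire ratio below is a hypothetical placeholder for your own funnel data.

```python
def admin_hours_saved(hires_per_year: int, minutes_saved: float,
                      candidates_per_hire: float = 1.0) -> float:
    """Recruiter hours returned per year when automation saves minutes_saved per candidate."""
    return hires_per_year * candidates_per_hire * minutes_saved / 60


floor_estimate = admin_hours_saved(5_000, 10)                          # one candidate per hire
funnel_estimate = admin_hours_saved(5_000, 10, candidates_per_hire=3)  # hypothetical ratio
```

With the placeholder ratio of 3, the estimate rises to 2,500 hours, so plug in your real candidates-per-hire number before you put this in a business case.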

Programmatic ads reduce wasted spend. Automated screening reduces agency dependence. And consistent workflows reduce rework, which is the sneakiest cost of all.

Risks, Ethics and Compliance

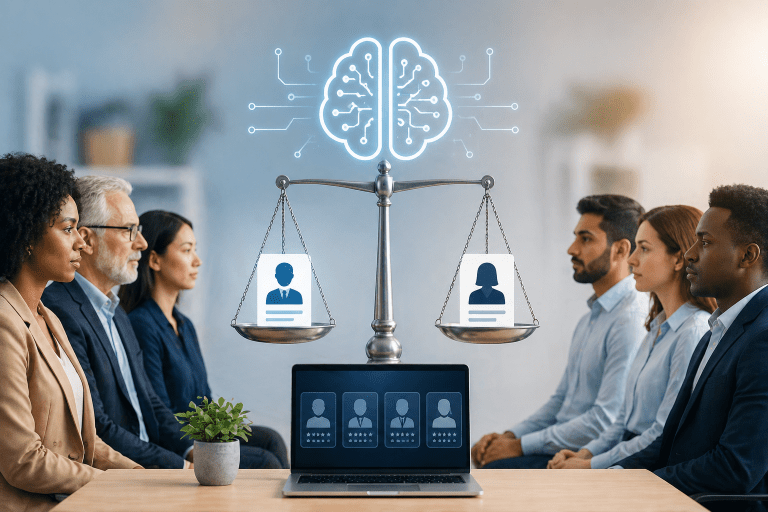

Bias, disparate impact, and model drift

Bias isn’t just “bad intent.” It’s often bad data. If your historic hiring favored certain schools, titles, or career paths, the model can learn that pattern and repeat it.

Disparate impact can show up quietly: one group advances at a lower rate even when qualifications are similar. You need to measure selection rates by stage and investigate gaps. And you need to do it continuously, not once a year when someone panics.
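A common first screen for selection-rate gaps is the “four-fifths” rule of thumb from the US Uniform Guidelines on Employee Selection Procedures: a group whose selection rate is below 80% of the highest group’s rate warrants a closer look. This sketch applies it to one stage; the group labels and counts are hypothetical, and whether you may collect group data at all depends on your jurisdiction and consent process. A flag means “investigate,” not a legal conclusion.

```python
def impact_ratios(stage: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Selection rate of each group divided by the highest group's rate at one stage.

    stage maps group -> (advanced, considered).
    """
    rates = {g: adv / total for g, (adv, total) in stage.items() if total > 0}
    best = max(rates.values(), default=0.0)
    if best == 0:
        return {g: 1.0 for g in rates}  # nobody advanced; nothing to compare
    return {g: rate / best for g, rate in rates.items()}


def groups_to_investigate(ratios: dict[str, float], threshold: float = 0.8) -> list[str]:
    """Groups below the four-fifths rule of thumb."""
    return sorted(g for g, r in ratios.items() if r < threshold)


screen_to_interview = {"group_a": (50, 100), "group_b": (30, 100)}
ratios = impact_ratios(screen_to_interview)
flagged = groups_to_investigate(ratios)
```

Run it per stage, not just on final offers: a gap at screening can be masked by small numbers further down the funnel.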

Model drift is the other issue. Your roles change. Your labor market changes. Your model keeps scoring like it’s 2024. So set a review cadence and retrain or recalibrate when performance drops.
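One lightweight drift signal is the Population Stability Index (PSI), which compares today’s score distribution with the distribution at launch. This is a swapped-in technique, not something the tools above necessarily use, and it only detects shifts in scores, not whether those scores are still accurate, so keep reviewing outcomes too. It assumes scores in [0, 1] and equal-width bins; the sample data is hypothetical.

```python
import math


def population_stability_index(baseline, current, bins=10, eps=1e-6):
    """PSI between two lists of model scores in [0, 1], using equal-width bins."""
    def shares(scores):
        counts = [0] * bins
        for s in scores:
            if not 0.0 <= s <= 1.0:
                raise ValueError(f"score out of range: {s}")
            counts[min(int(s * bins), bins - 1)] += 1
        return [max(c / len(scores), eps) for c in counts]  # eps avoids log(0)

    expected, actual = shares(baseline), shares(current)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


baseline_scores = [0.05] * 50 + [0.95] * 50  # hypothetical scores at launch
this_quarter = [0.05] * 10 + [0.95] * 90     # hypothetical scores now
psi = population_stability_index(baseline_scores, this_quarter)
```

A commonly cited heuristic (not a standard) treats PSI below 0.1 as stable, 0.1 to 0.25 as worth watching, and above 0.25 as a trigger to recalibrate.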

Privacy, consent, data retention

If you’re using AI tools, you’re handling sensitive personal data at scale. Follow GDPR and CCPA-style principles even if you’re not strictly required to. Why? Because it’s the right baseline, and regulators are getting less patient.

Keep data minimization front and center: collect what you need, store it for a defined period, and delete it on schedule. Also confirm where data is processed, whether vendors train models on your candidate data, and how they handle deletion requests.
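“Delete it on schedule” is easiest to enforce when it’s a query someone runs, not a promise in a policy doc. This sketch assumes each record tracks its last activity date and a legal-hold flag; the 365-day window is a placeholder, since the real retention period comes from legal review for each jurisdiction.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class CandidateRecord:
    candidate_id: str
    last_activity: date
    legal_hold: bool = False  # e.g. an open dispute; never auto-delete these


def due_for_deletion(records: list[CandidateRecord], retention_days: int,
                     today: date) -> list[str]:
    """IDs whose last activity is older than the retention window and not on hold."""
    cutoff = today - timedelta(days=retention_days)
    return [r.candidate_id for r in records if r.last_activity < cutoff and not r.legal_hold]


records = [
    CandidateRecord("c-001", date(2025, 1, 10)),
    CandidateRecord("c-002", date(2026, 1, 10)),
    CandidateRecord("c-003", date(2024, 12, 1), legal_hold=True),
]
to_delete = due_for_deletion(records, retention_days=365, today=date(2026, 4, 28))
```

The same list should go to every vendor holding copies of the data, so deletion actually propagates beyond your ATS.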

Transparency and candidate communications

Candidates don’t need a 12-page whitepaper. They need clear language: what you’re doing, why you’re doing it, and what choices they have.

If you’re using automated screening, say so. If interviews are recorded or transcribed, say so. If a chatbot is not a human, don’t pretend it is. People can smell that a mile away, and it backfires.

Human-in-the-loop decisioning

Human-in-the-loop means a person can review, override, and explain outcomes. It also means someone is accountable.

My rule: AI can recommend and rank. Humans decide. If your vendor pitches “fully automated hiring,” ask them how they handle bias audits, appeals, and explainability. Then watch them squirm.

AI Recruiting Tools Landscape

Core categories

The market is crowded, and vendors love fuzzy category names. I break AI recruiting tools into six buckets so you can compare apples to apples:

- ATS with AI features: parsing, matching, workflow automation, basic analytics.

- Sourcing platforms: intelligent search, talent pooling, outreach sequencing.

- Chatbots and candidate messaging: career site bots, SMS, email automation, FAQ flows.

- Assessments: skills tests, work simulations, structured scoring.

- Interview intelligence: guides, transcription, summaries, coaching insights.

- Analytics and fairness monitoring: funnel metrics, audit trails, compliance reporting.

Some suites cover multiple buckets. Others do one thing extremely well. Your job is to decide whether you want a suite, a stack, or a hybrid.

Key features checklist

If you’re evaluating vendors, don’t get hypnotized by demos. Ask for proof. Here’s the checklist I keep coming back to:

- Integrations with your ATS, HRIS, calendar, email, and identity provider.

- Audit logs that show who changed what, when, and why.

- Explainability you can share internally, and ideally externally in plain language.

- Security basics: SOC 2, encryption, access controls, data residency options.

- Data controls: retention settings, deletion workflows, model training opt-out.

- Bias testing support: built-in reporting or at least exportable data for your audits.

- Workflow configurability: can you adapt stages and rules without paying for custom work?

And ask this blunt question: “Show me how your tool fails.” If they can’t answer, you’re buying marketing.

How to Implement AI in Recruiting

Identify highest-ROI workflows to automate

Start with friction. Where do candidates stall? Where do recruiters waste time? Where do hiring managers slow everything down?

High-ROI starting points are usually:

- Scheduling automation for panels

- Screening triage for high-volume roles

- Candidate messaging and status updates

- Job ad optimization and distribution

But if your process is inconsistent, fix that first. Automating chaos just gives you faster chaos.

Data readiness and process standardization

AI is only as good as your data. If your ATS has missing stages, inconsistent reasons for rejection, and free-text notes that no one can interpret, your “insights” will be garbage.

Standardize your funnel stages. Define rejection reasons. Require structured scorecards. Then connect the dots between hiring outcomes and what happened in the process.

And don’t skip feedback loops. If you never feed hiring outcomes back into your system, your matching will never improve.

Pilot design, stakeholder buy-in, change management

A pilot should be small enough to control and big enough to matter. Pick one role family, one region, and a clear success definition. Then run it for 90 days, with checkpoints at day 30 and day 60.

Here’s a simple 30/60/90-day pilot roadmap that actually works in the real world:

- Days 1 to 30: map the current funnel, set baseline metrics, configure workflows, train recruiters, and draft candidate disclosures.

- Days 31 to 60: go live with a limited req set, review weekly for false rejects and bottlenecks, and tune rules.

- Days 61 to 90: expand volume, add fairness reporting, document decisions, and decide whether to scale or stop.

Change management is where most teams stumble. Recruiters worry about being replaced. Hiring managers worry about losing control. So name it. Tell them what AI will do, what it won’t, and how humans stay accountable.

Governance: policies, review cadence, vendor due diligence

Governance sounds boring until you need it. Then it’s everything.

Set policies for: what data is allowed, what models can be used, how often outputs are reviewed, and what happens when the system makes a bad call. Also define escalation paths. If a candidate challenges a decision, who responds?

Vendor due diligence should include security review, legal review, bias testing approach, and contract terms around data usage. If a vendor wants to train on your candidate data by default, pause and negotiate.

KPIs to Measure Success

Time-to-fill, time-in-stage, cost-per-hire

If you measure only time-to-fill, you’ll miss the real bottleneck. Track time-in-stage so you can see where candidates get stuck.

- Time-to-fill: role opened to accepted offer

- Time-in-stage: application to screen, screen to interview, interview to decision

- Cost-per-hire: ads, tools, agency fees, recruiter time estimates

When AI is working, you should see fewer stalls and fewer last-minute scrambles.
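Time-in-stage falls out of the stage-change timestamps most ATSs already log. This sketch assumes you can export, per candidate, a list of (stage entered, timestamp) events; stage names are placeholders, and the current stage is omitted because it has no exit time yet.

```python
from datetime import datetime


def days_in_stage(events: list[tuple[str, datetime]]) -> dict[str, float]:
    """Days spent in each stage, from (stage_entered, timestamp) events for one candidate."""
    ordered = sorted(events, key=lambda event: event[1])
    durations: dict[str, float] = {}
    for (stage, entered), (_, exited) in zip(ordered, ordered[1:]):
        # Accumulate, so a candidate who re-enters a stage gets total time there.
        durations[stage] = durations.get(stage, 0.0) + (exited - entered).total_seconds() / 86_400
    return durations


timeline = [
    ("application", datetime(2026, 3, 1)),
    ("screen", datetime(2026, 3, 4)),
    ("interview", datetime(2026, 3, 11)),
    ("decision", datetime(2026, 3, 13)),
]
durations = days_in_stage(timeline)
```

Here the screen stage (7 days) is the obvious stall; averaged across all candidates for a role, the same numbers show where automation should go first.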

Source quality, pass-through rates, quality-of-hire proxies

Source quality is about downstream outcomes, not applicant volume. Track pass-through rates from stage to stage by source. That’s where programmatic distribution and sourcing tools either prove value or get exposed.
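Pass-through by source is a simple ratio once you know how far each candidate got. This sketch assumes a linear funnel with hypothetical stage names and that you can export (source, furthest stage reached) per candidate; map your ATS stages onto something like it.

```python
from collections import Counter

# Hypothetical linear funnel; map your ATS stage names onto something like this.
STAGES = ["applied", "screened", "interviewed", "offered", "hired"]


def pass_through_by_source(candidates, from_stage, to_stage):
    """Share of candidates from each source who reached from_stage and then to_stage.

    candidates: iterable of (source, furthest_stage_reached).
    """
    i, j = STAGES.index(from_stage), STAGES.index(to_stage)
    reached_from, reached_to = Counter(), Counter()
    for source, furthest in candidates:
        k = STAGES.index(furthest)
        if k >= i:
            reached_from[source] += 1
            if k >= j:
                reached_to[source] += 1
    return {source: reached_to[source] / n for source, n in reached_from.items()}


pipeline = [
    ("board_a", "applied"), ("board_a", "screened"), ("board_a", "interviewed"),
    ("referral", "interviewed"), ("referral", "hired"),
]
rates = pass_through_by_source(pipeline, "applied", "screened")
```

Compare sources at several stage pairs: a channel can look great at applied-to-screened and fall apart at interview-to-offer.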

For quality-of-hire proxies, don’t overcomplicate it. Start with:

- Hiring manager satisfaction at 30 and 90 days

- New hire ramp milestones

- Early attrition at 90 and 180 days

Are these perfect? No. Are they better than vibes? Absolutely.

Candidate NPS, response time, and drop-off

Candidate NPS or CSAT is useful if you keep it simple and consistent. Ask one question after key stages, not a 20-question survey no one answers.
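If you go the NPS route, the standard calculation on a 0 to 10 “how likely are you to recommend” question is the percentage of promoters (9 to 10) minus the percentage of detractors (0 to 6). The question wording and when you ask it are your call; the sample ratings are hypothetical.

```python
def net_promoter_score(ratings: list[int]) -> float:
    """Standard NPS on 0-10 answers: % promoters (9-10) minus % detractors (0-6)."""
    if not ratings:
        raise ValueError("no responses")
    if any(not 0 <= r <= 10 for r in ratings):
        raise ValueError("ratings must be 0-10")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100 * (promoters - detractors) / len(ratings)


after_screen = net_promoter_score([10, 10, 9, 5])
```

Track it separately per stage so a bad interview experience doesn’t hide behind a smooth application flow.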

Also track:

- First response time after application

- Interview scheduling time

- Drop-off rate from application start to submission

If your chatbot increases speed but drop-off rises, your flow is probably annoying or confusing.

Fairness metrics and audit reporting

You need fairness metrics by stage: application to screen, screen to interview, interview to offer. Look for selection rate differences across protected classes where legally permissible and where you have consent and appropriate handling.

At minimum, maintain audit reporting that shows:

- Model version and configuration at the time of decision support

- Inputs used for ranking or recommendations

- Human overrides and rationale

- Monthly or quarterly fairness checks

If you can’t audit it, you can’t defend it.
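The audit items above map naturally onto one immutable record per AI-assisted recommendation. This sketch is a hypothetical schema, not any vendor’s format; the one rule it enforces is that a human override without a written rationale is rejected at write time.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class DecisionSupportRecord:
    """One audit row per AI-assisted recommendation (field names are illustrative)."""
    candidate_id: str
    stage: str
    model_version: str
    config_id: str                   # hypothetical pointer to the scoring config in force
    inputs_used: tuple[str, ...]     # which fields fed the ranking
    recommendation: str              # what the tool suggested
    human_decision: str              # what a person actually decided
    override_rationale: Optional[str] = None
    recorded_at: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def __post_init__(self) -> None:
        # An override with no written reason is not defensible later.
        if self.human_decision != self.recommendation and not self.override_rationale:
            raise ValueError("human overrides need a rationale")

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)


row = DecisionSupportRecord(
    candidate_id="c-001",
    stage="screen",
    model_version="ranker-2026.04",
    config_id="cfg-17",
    inputs_used=("skills", "availability"),
    recommendation="advance",
    human_decision="reject",
    override_rationale="Role requires on-site work the candidate declined.",
)
```

Writing these rows to append-only storage gives your monthly and quarterly fairness checks something concrete to sample.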

Best Practices for Responsible AI-Driven Recruitment

Skills-first hiring and structured interviews

If you want AI to improve hiring, move to skills-first hiring. That means defining skills, measuring skills, and reducing reliance on pedigree signals like school names or brand-name employers.

Structured interviews are the other half. Same questions, same scoring rubric, same evidence standards. AI can help generate question banks, but you decide what “good” looks like.

And yes, this takes discipline. But it pays off because it reduces bias and increases consistency.

Bias testing, monitoring, and documentation

Bias testing isn’t a one-time checkbox. It’s a habit.

Document:

- What the tool does and does not do

- What data it uses

- How you tested it before launch

- How you monitor it after launch

Now, the uncomfortable part: if you find bias, you may need to pause automation, adjust thresholds, retrain, or remove certain features. That’s not failure. That’s responsible operations.

Candidate experience safeguards

Candidate experience safeguards are simple rules that prevent “automation harm.” Here are the ones I recommend:

- Always offer a human contact path within one step

- Don’t auto-reject based only on opaque scoring

- Keep messages honest: bots should identify themselves

- Limit reminders: no one wants 7 texts in 24 hours

Respect is a feature. Treat it like one.
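“Limit reminders” works best as a hard check in the messaging flow rather than a guideline. Here’s a minimal sketch of a rolling-window cap; the limit of 2 messages per 24 hours is a hypothetical default, so set your own.

```python
from datetime import datetime, timedelta


def can_send_reminder(sent_at: list[datetime], now: datetime,
                      max_per_window: int = 2,
                      window: timedelta = timedelta(hours=24)) -> bool:
    """True if one more message keeps the candidate under the cap for the rolling window."""
    recent = [t for t in sent_at if now - window < t <= now]
    return len(recent) < max_per_window


now = datetime(2026, 4, 28, 12, 0)
busy = can_send_reminder([now - timedelta(hours=1), now - timedelta(hours=5)], now)
quiet = can_send_reminder([now - timedelta(hours=30), now - timedelta(hours=2)], now)
```

A rolling window is stricter than a calendar-day reset, which prevents the “11:59 p.m. and 12:01 a.m.” double text.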

AI Governance for Recruiting template

This is one of the biggest gaps I see. Companies buy tools, but no one owns the system end-to-end. Then something goes wrong, and everyone points at everyone else.

Here’s a practical governance template you can copy and adapt. Keep it lightweight, but real.

Roles and responsibilities

- Executive sponsor: sets risk tolerance, approves budget, resolves conflicts

- Recruiting operations owner: owns process design, configuration, and day-to-day performance

- HR legal or compliance: reviews disclosures, consent, retention, and regulatory alignment

- Security and IT: vendor security review, access controls, integration approvals

- DEI or people analytics: fairness metrics, disparate impact monitoring, documentation

- Hiring manager council: feedback on workflow usability and quality signals

- Vendor manager: contract terms, SLAs, incident handling, roadmap alignment

RACI you can actually run

Keep a simple RACI for the core decisions:

- Tool selection: Responsible recruiting ops, Accountable executive sponsor, Consulted legal, security, DEI, Informed hiring leaders

- Model configuration and scoring rules: Responsible recruiting ops, Accountable people analytics, Consulted DEI and legal, Informed recruiters

- Candidate disclosures: Responsible legal, Accountable HR leadership, Consulted recruiting ops, Informed candidates and hiring managers

- Fairness audits: Responsible people analytics, Accountable DEI leader, Consulted legal, Informed executive sponsor

Approval workflow and audit cadence

Set an approval workflow for changes. No “quick tweaks” in production without a record.

- Pre-launch: baseline metrics, bias testing plan, disclosure copy, security sign-off

- Weekly for first 8 weeks: review false rejects, candidate complaints, stage conversion changes

- Monthly: fairness metrics by stage, model performance checks, sampling of human overrides

- Quarterly: vendor review, incident review, process updates, retraining decisions

This cadence sounds like work because it is. But it beats explaining to leadership why your “efficiency tool” created a compliance fire drill.

Build vs Buy vs Embed in ATS decision framework

Teams ask me this all the time: should we build AI ourselves, buy a point solution, or just turn on what our ATS already offers? The right answer depends on your scale, risk tolerance, and integration maturity.

When to embed in your ATS

Choose ATS-native AI when you need speed and simplicity. It’s usually “good enough” for parsing, basic matching, workflow automation, and reporting.

But be honest: ATS AI can be shallow. If you need advanced sourcing, deep interview intelligence, or sophisticated fairness monitoring, you may outgrow it fast.

When to buy point solutions

Buy when you need best-in-class capability in one stage, like sourcing or interview intelligence. This is common in technical hiring where the funnel is expensive and every improvement matters.

The catch is integration. If your tools don’t share data cleanly, you create a fragmented candidate record. Recruiters hate that. Candidates feel it too.

When to build

Build is for organizations with strong data teams, unique workflows, and the appetite to maintain models over time. If you build, you’re also signing up for monitoring, retraining, documentation, and security reviews forever. Not just launch week.

So ask yourself: do we want a product, or do we want a project?

Integration architecture diagram description

If I were drawing your integration architecture on a whiteboard, it would look like this:

- System of record: ATS holds candidate profile, stages, disposition reasons, and offer outcomes.

- Engagement layer: chatbot and messaging platform writes interaction logs back to ATS.

- Scheduling layer: calendar integration plus scheduling tool updates interview events in ATS.

- Interview layer: interview intelligence tool stores recordings and summaries, pushes structured scorecards to ATS.

- Assessment layer: skills platform sends scores and pass-fail outcomes to ATS.

- Analytics layer: data warehouse pulls from ATS and tools, generates KPI dashboards and fairness reporting.

- Identity and security: SSO controls access, audit logs feed into your security monitoring.

The goal is simple: one candidate truth, minimal manual entry, and auditability end-to-end.
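“One candidate truth” in practice means every tool writes events back keyed by the same candidate ID, and the system of record orders and de-duplicates them. This is a hypothetical event shape, not a real ATS API; the de-duplication assumes a retried delivery carries identical fields.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class WriteBackEvent:
    """An event a connected tool writes back to the ATS (fields are illustrative)."""
    candidate_id: str
    source_system: str  # e.g. "chatbot", "scheduling", "assessment"
    event_type: str
    occurred_at: datetime


def build_timelines(events: list[WriteBackEvent]) -> dict[str, list[WriteBackEvent]]:
    """One ordered, de-duplicated timeline per candidate."""
    timelines: dict[str, list[WriteBackEvent]] = defaultdict(list)
    seen: set[WriteBackEvent] = set()
    for event in events:
        if event in seen:  # e.g. a webhook delivered twice
            continue
        seen.add(event)
        timelines[event.candidate_id].append(event)
    for timeline in timelines.values():
        timeline.sort(key=lambda e: e.occurred_at)
    return dict(timelines)


events = [
    WriteBackEvent("c-001", "scheduling", "interview_booked", datetime(2026, 4, 2, 15)),
    WriteBackEvent("c-001", "chatbot", "availability_collected", datetime(2026, 4, 1, 9)),
    WriteBackEvent("c-001", "scheduling", "interview_booked", datetime(2026, 4, 2, 15)),
]
timelines = build_timelines(events)
```

Keeping `source_system` on every event is what makes the audit trail readable later: you can see which tool did what, and when.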


Candidate Trust Pack

Most companies talk about transparency and then publish vague fluff. Candidates deserve better. And frankly, you deserve fewer complaints and fewer misunderstandings.

Sample disclosure language for career sites

Here’s a plain-language disclosure you can adapt:

We use automated tools to help manage applications, such as routing candidates to the right roles, scheduling interviews, and summarizing interview notes. These tools support our team, but hiring decisions are made by people. You can request accommodations or ask questions at any time by contacting our recruiting team.

Sample consent copy

Keep consent clear and specific:

By submitting your application, you agree that we may process your information to evaluate your candidacy, communicate with you, and improve our hiring process. Where required, we will ask for additional consent before recording or transcribing interviews. You can request access to or deletion of your data according to our privacy policy.

FAQ snippet you can paste into your careers page

- Do you use AI in hiring? Yes, we use automated tools for tasks like scheduling and application routing. Our hiring team makes the final decisions.

- Will AI reject me automatically? We may use automated screening to prioritize applications, but we also review candidates through structured steps and human oversight.

- Is my interview recorded? If an interview is recorded or transcribed, we will tell you in advance and explain how it will be used.

- How do I request accommodations? Contact our recruiting team and we’ll support you through the process.

This is not just compliance. It’s brand. People remember how you treat them when you have power.

FAQs

Is AI replacing recruiters?

No. It’s replacing parts of the job recruiters never enjoyed: scheduling, repetitive messaging, first-pass sorting, and reporting. The human work is still the hard work: role definition, stakeholder alignment, candidate assessment, closing, and building trust.

But recruiting roles will change. Recruiters who learn how to run AI-assisted workflows will outperform recruiters who insist on doing everything manually. That’s just reality.

Can AI legally screen candidates?

Often yes, but “legal” depends on where you operate, what the tool does, what data it uses, and how decisions are made. The safest posture is human-in-the-loop, clear disclosures, documented testing, and ongoing monitoring for disparate impact.

If a vendor promises you don’t need to worry about compliance because “our AI is fair,” that’s not a promise. That’s a red flag.

What data should and shouldnt be used?

Use data that is job-relevant and defensible: skills evidence, work history, certifications, assessment results, structured interview scores, and availability constraints where applicable.

Avoid sensitive attributes and proxies that can recreate them. Also be cautious with scraped social data, personality inferences, and anything that feels like “vibe scoring.” If you can’t explain why it matters for the job, don’t use it.

How to explain AI decisions to candidates?

Explain the process, not the math. Tell candidates what the tool does, what humans do, and how they can ask questions or request accommodations.

When candidates are rejected, you can share general guidance like “we assessed skills alignment and role requirements” without pretending you can provide a precise algorithmic breakdown. The goal is clarity and respect, not technical theater.

AI-driven recruitment in 2026 is not about replacing humans. It’s about building a faster, more consistent, more measurable hiring engine without sacrificing fairness or candidate trust.

So start where ROI is obvious: scheduling, messaging, distribution, and screening triage. Then mature into skills-based matching, structured interviews, and analytics that actually change decisions. Wrap it all in governance, because without governance you’re just hoping nothing goes wrong.

If you take one thing from this guide, let it be this: automation should earn trust, not spend it. Build your process so candidates feel respected, recruiters feel supported, and leaders can see the numbers. That’s how you scale hiring in 2026 without losing your soul.